Cerebras $CBRS has managed to do something many thought was near impossible. Skeptics grumbled further that even if the company could pull it off, it would not be a product anyone needed. Now that everyone is focused on token generation rather than training, that sentiment has shifted.

Cerebras uses an entire uncut wafer to create a massive device that combines 900K cores with 44GB of on-chip memory. There are two special little pieces of magic. One is that the device allows complete interconnection between memory and compute on the wafer. The second is the process by which they deal with variable yield across the wafer: they deactivate, ignore or route around "dead" cores.

The result, Wafer Scale Engine-3, is an engineering marvel of chip size, memory bandwidth and processing power that can "fit" a 24T parameter model right on the "chip." Once that is done, the speed of token generation is orders of magnitude faster than conventional approaches.

Back in the old days of 2024 nobody thought this would be useful even if they could pull it off. But now that all eyes are focused on inference and token generation it's a different story.

Some staged demonstrations that are part of the roadshow are designed to illustrate how fast it can be under ideal conditions. Lead customers like Mistral and G42 are among the early positive use cases.

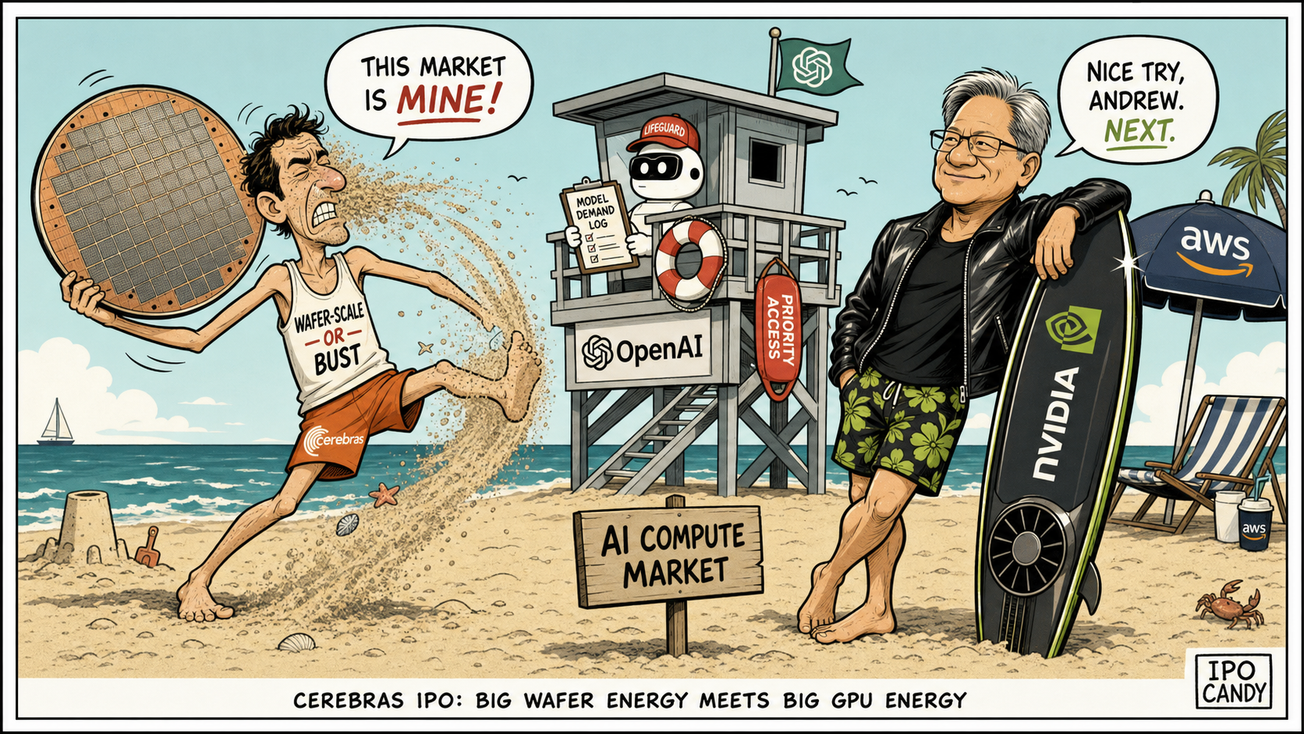

Who cares the most about rapid token generation? OpenAI! This is a new central pillar to the IPO now. Sam Altman is (was?) obsessed with the consumer AI market which is extra sensitive to response time and lower token cost. That's a perfect fit for what Cerebras can provide.

OpenAI and Cerebras signed a very large "multi-year, low-latency inference" deal to deploy up to 750MW of systems. It's specifically around "low-latency inference" and not for training. (We will be coming back to this over time.)

With OpenAI as an anchor customer/strategic investor Cerebras has some major validation. OpenAI loaned Cerebras $1B last December in exchange for warrants for 33 million shares. (One has to admire Sam Altman in terms of doing deals.)

The sentiment around these "circular" deals varies. It reminds many of the 1999 Internet Bubble but there are some major differences. It does rhyme though and if we see cracks in the capital investment and/or financing this can create ripple effects.

The net impact is industry validation, improved visibility and built-in growth. It comes at the expense of customer concentration risk that can create lumpiness, compress margins and reduce the size of the potential ecosystem for Cerebras. (Another area we will be coming back to over time.)

Niche or Not?

The preponderance of evidence points to Cerebras being a niche, not a new platform component. Even a "niche" in AI infrastructure can be very significant though. Everyone is doing rather well - GPU, CPU, Memory.

When I say "preponderance of evidence" I am thinking about the upcoming release of Vera Rubin w/Groq, the new emerging system architecture for inference, software optimization, ecosystem challenges and management quality/execution. Taken together, Cerebras will end up "trapped" in a smaller SAM within the overall AI inference TAM.

First of all this day was coming and Jensen knew it years ago when he wanted to buy ARM Semiconductor $ARM. The commercial moment has only just arrived so competition will be increasing dramatically over the next year.

Cerebras likes to compare their performance to the GPU but that's misleading. Everyone already knows we are shifting to a heterogeneous inference stack and Vera Rubin is just around the corner.

Nvidia also did a deal to license Groq's inference technology and team to deliver even better "low latency" performance. You know that Jensen and his team are going to show off some new benchmarks around inference in response!

If one only considers the hardware Cerebras will still have a "physics advantage" that Nvidia may not be able to beat in all use cases. Oh and BTW I won't cover it here but there is an "after Vera Rubin" here which is more wafer-level and in the pipe already at TSMC.

However it's not all about chips!

Inference lends itself to a different system architecture and software approach. One major theme that is emerging is a "mix of models," which allows very high performance for specialized tasks. It makes a distributed model more effective for many scenarios. This is the approach that looks more mainstream than one model/one chip.

Software optimization makes a huge difference. This is what created some of the commotion around DeepSeek when they demonstrated some surprising results from a "weak" system. The armies of software developers are just getting started in terms of building more clever systems that combine these system architectures and optimizations for inference.

Cerebras is vertically integrated, in part due to necessity based on the technology. The "chip" goes into their own system rather than to an OEM like Dell $DELL. They offer their own "Inference Cloud" but also have a good relationship with AWS, which is deploying CS-3 systems via Bedrock. There's nothing wrong with vertical integration per se (Apple has done pretty well with it) but it limits the size of the potential ecosystem.

Cerebras made a BIG mistake when they publicly kicked sand in Jensen's face and positioned CUDA as now just "an API call" in the context of AI. Jeez. Bad idea!

Investors should evaluate CEO Andrew Feldman themselves but compared to Jensen? It would have been so easy to position the company as just focused on being a great solution for low-latency inference (which it is) and not poke the bear. I think it will cost them.

Stock Conclusion

The headline market cap will be $27B at the midpoint of the $115-125 range. A fully diluted value would be closer to $36B but valuation isn't really going to be a focus for what will be a very retail driven stock.
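As a quick sanity check on those numbers, here is a minimal sketch that backs out what the quoted market caps imply at the midpoint. The share counts it prints are only implied by the figures in this post and are not taken from any filing; the variable names are mine.

```python
# Figures quoted in the post. Share counts below are IMPLIED by those
# figures, not taken from a filing.
range_low, range_high = 115.0, 125.0   # IPO price range, $ per share
headline_cap = 27e9                    # headline market cap, $
diluted_cap = 36e9                     # fully diluted value, $

midpoint = (range_low + range_high) / 2

# Shares implied by each market cap at the midpoint price.
implied_basic_shares = headline_cap / midpoint
implied_diluted_shares = diluted_cap / midpoint

# Extra shares in the fully diluted count, as a fraction of the basic count.
dilution = implied_diluted_shares / implied_basic_shares - 1

print(f"midpoint price:         ${midpoint:.0f}")
print(f"implied basic shares:   {implied_basic_shares / 1e6:.0f}M")
print(f"implied diluted shares: {implied_diluted_shares / 1e6:.0f}M")
print(f"implied dilution:       {dilution:.0%}")
```

The gap between the two caps works out to roughly a third more shares on a fully diluted basis, which is why the fully diluted figure is the one used for the multiple further down.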

If I think about/compare CBRS to an Astera Labs $ALAB it kind of makes sense at that level.

The OpenAI deal is said to be "worth" over $20B of revenue in the next three years. There's also a larger potential at the end with an option of another 1.25GW of capacity to take them through 2030.

So we can see a spectacular revenue ramp for Cerebras that could get them to $7B in annual revenues (if they have the capacity). Most expect them to get to more like $2-3B in revenue given the limited execution history.

Margins are where it gets interesting. Most agree they can scale gross margins from 40-45% to 50%. Management is talking about 60% but it's just a number on the slide.

Operating margins are still negative and the headlines about them being profitable are from a non-cash accounting gain, not real earnings. It should be easy to improve from negative 30% but what to model?

With a 50% gross margin it's hard to model operating margins at 20%, let alone the 40% management speaks of, even non-GAAP. We can be very kind and say 30%.

If things go very well and they get to $2.5B in annual revenue, a 30% operating margin gets us to $750M of operating income, which is pretty good, but remember that's two or three years away.

At the fully-diluted midpoint the very forward PE is roughly 50x ($36B over $750M, treating operating income as earnings), which is again consistent with where other AI chip names are trading.
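For anyone who wants to play with the assumptions, here is a rough sketch of that arithmetic. It uses only the numbers from this post ($2.5B revenue, $36B fully diluted value, and the 20%, 30% and 40% operating margins discussed above) and treats operating income as a stand-in for earnings, which ignores taxes and interest. The function names are illustrative.

```python
# Back-of-envelope multiple using figures from the post (all in $ billions).
# Operating income is used as a proxy for earnings, so this is a loose
# "PE" that ignores taxes and interest.

def operating_income(revenue_b: float, operating_margin: float) -> float:
    """Operating income in $B for a given revenue and margin."""
    return revenue_b * operating_margin

def forward_multiple(market_cap_b: float, earnings_b: float) -> float:
    """Market cap divided by (proxy) earnings."""
    return market_cap_b / earnings_b

revenue_b = 2.5        # "things go very well" annual revenue
diluted_cap_b = 36.0   # fully diluted value at the $120 midpoint

# Margins discussed in the post: hard-to-model 20%, the "kind" 30%,
# and the 40% management speaks of.
multiples = {}
for margin in (0.20, 0.30, 0.40):
    income_b = operating_income(revenue_b, margin)
    multiples[margin] = forward_multiple(diluted_cap_b, income_b)
    print(f"margin {margin:.0%}: operating income ${income_b * 1000:.0f}M, "
          f"multiple {multiples[margin]:.0f}x")
```

At the 30% case the multiple comes out at 48x, which is the "50x" in round numbers. The spread across margins shows how much of the valuation rests on that one assumption.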

To me it all suggests that the $120 midpoint is a decent place to anchor on. The consensus in trading it is to just own it and "see how high it goes," which of course nobody knows. The hype should be strong with this one.

I'd be careful though, especially as we get closer to Vera Rubin adoption. CBRS could be cut in half with one Jensen press conference.